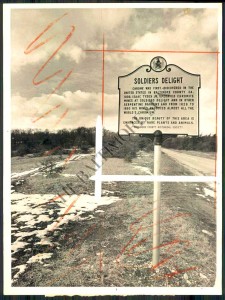

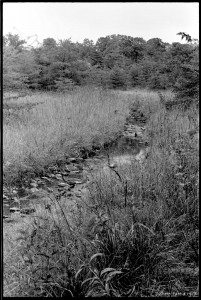

As a kid, my favorite thing about Soldiers Delight was playing in the streams. They were very different from every other stream I knew, all of which were muddy. In Baltimore County, walking in a stream generally meant walking in mud. The water in the Soldiers Delight streams was clear, and the stream bottoms were mostly stony. The banks were also grassy and sunny. Streams elsewhere could be sunny, but even managed streams running through pastures or parks were often lined with a thicket of woody plants. At Soldiers Delight, long stretches of stream were lined only with tall grasses and wildflowers. Plus there were minnows and frogs and snakes. These were great streams.